Most data infrastructure in crypto ultimately reads from an RPC node. This is a reasonable engineering choice that has become a security problem. RPC endpoints lag, drop logs, return inconsistent state across providers, and - as a recent $290M bridge incident illustrated - can be compromised outright. The common theme is that applications treating "whatever the RPC returned" as ground truth have delegated a trust decision without acknowledging it.

The machinery to verify an RPC's output is not missing. It is in every block header, and most data pipelines simply do not use it. This post walks through what is there, how SQD validates against it at ingestion, and - at the end - previews Snoopy, an experimental verification layer shipping in beta this week.

The Block Header Is a Commitment

On EVM chains, every header contains, among other fields:

- parentHash, hash of the previous header

- transactionsRoot, root of a Merkle Patricia Trie over the block's transactions

- receiptsRoot, root of an MPT over transaction receipts

- logsBloom, a 2048-bit Bloom filter over every log emitted in the block

The header is what consensus signs. Every other byte an RPC can hand you - transactions, receipts, logs - is trustworthy only to the extent it reproduces one of those commitments on recomputation.

Merkle Patricia Tries

The MPT is a radix trie keyed by 4-bit nibbles, where each reference to a child node is the keccak256 hash of that node's RLP encoding (nodes that encode to fewer than 32 bytes are embedded inline instead). The root transitively commits to every leaf, and two properties make it useful for data validation. First, any change to any leaf changes the root: swap, reorder, or alter a single byte in one transaction, and the root recomputed from the modified set will not match the header. Second, inclusion proofs are small - the path of nodes from root to leaf is enough to prove a specific transaction was in a block, which is how light clients and L2 bridge proofs work.

For ingestion, the first property carries the weight. Given a claim that a block contains a particular set of transactions, rebuilding the trie and comparing roots is definitive: a single missing or malformed transaction produces a mismatch.
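The root-comparison idea can be illustrated without a full MPT. The sketch below swaps in a plain binary Merkle tree over SHA-256. It is not the Ethereum trie (no RLP, no nibble paths, no keccak256), and `merkle_root` is a hypothetical helper. It only demonstrates the property that matters at ingestion: altering, dropping, or reordering a leaf changes the root.

```python
import hashlib

def merkle_root(leaves: list[bytes]) -> bytes:
    """Binary Merkle root over SHA-256 (a stand-in, NOT an Ethereum MPT)."""
    if not leaves:
        return hashlib.sha256(b"").digest()
    # Prefix leaves and inner nodes differently so they cannot be confused.
    level = [hashlib.sha256(b"\x00" + leaf).digest() for leaf in leaves]
    while len(level) > 1:
        # With an odd number of nodes, carry the last one up unchanged.
        carried = level.pop() if len(level) % 2 else None
        level = [
            hashlib.sha256(b"\x01" + level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
        if carried is not None:
            level.append(carried)
    return level[0]

def matches_commitment(leaves: list[bytes], committed_root: bytes) -> bool:
    """Rebuild the root from the claimed leaves and compare to the commitment."""
    return merkle_root(leaves) == committed_root

# Example: the "header" commits to three transactions.
txs = [b"tx0", b"tx1", b"tx2"]
header_root = merkle_root(txs)
```

Any claimed transaction set other than the exact original, in the original order, fails `matches_commitment` against `header_root`.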

State roots - which cover account balances and contract storage - require re-executing the EVM and are a separate concern. SQD does not recompute them; full nodes do. We verify the parts of the block we actually ingest.

Logs Bloom

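The logsBloom is a 2048-bit filter: for every log, the contract address and each indexed topic are hashed with keccak256, and each hash sets three bits. Bloom filters have a useful asymmetry - false positives are possible, false negatives are not - but that is not how the filter is used here. At ingestion, the bloom is recomputed from ingested logs and byte-equality is required against the header. A missing log typically leaves bits unset; a spurious log typically sets extra ones. The comparison is exact, with no probabilistic lookup involved. The one blind spot is a log whose bits are all already set by other logs in the same block (for example, a repeated event from the same contract with the same topics): adding or dropping it leaves the 2048 bits unchanged, so the bloom cannot be the only check.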
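A minimal sketch of the recomputation follows. Python's standard library does not ship Keccak-256 (`hashlib.sha3_256` uses different padding and gives different digests), so the hash is passed in as a parameter. `logs_bloom`, `bloom_matches`, and `stand_in_hash` are hypothetical names, and the stand-in exists only to exercise the bit logic. For real chain data a genuine keccak256 function would be required.

```python
import hashlib
from typing import Callable, Iterable

Log = tuple[bytes, list[bytes]]  # (contract address, indexed topics)

def logs_bloom(logs: Iterable[Log], keccak256: Callable[[bytes], bytes]) -> bytes:
    """Recompute a 256-byte (2048-bit) bloom from log addresses and topics."""
    bloom = 0
    for address, topics in logs:
        for item in (address, *topics):
            digest = keccak256(item)
            # Three bits per hashed item: the low 11 bits of each of the
            # first three big-endian 16-bit words of the digest.
            for i in (0, 2, 4):
                bit = int.from_bytes(digest[i:i + 2], "big") & 0x7FF
                bloom |= 1 << bit
    # Bit 0 lands in the last byte, matching the big-endian header layout.
    return bloom.to_bytes(256, "big")

def bloom_matches(header_bloom: bytes, logs: Iterable[Log],
                  keccak256: Callable[[bytes], bytes]) -> bool:
    """Byte-equality check between the recomputed and committed bloom."""
    return logs_bloom(logs, keccak256) == header_bloom

def stand_in_hash(data: bytes) -> bytes:
    # ASSUMPTION: placeholder only. SHA3-256 is NOT keccak256.
    return hashlib.sha3_256(data).digest()

# Example with made-up addresses and topics.
demo_logs: list[Log] = [
    (b"\x11" * 20, [b"\xaa" * 32]),
    (b"\x22" * 20, [b"\xbb" * 32, b"\xcc" * 32]),
]
demo_bloom = logs_bloom(demo_logs, stand_in_hash)
```

Passing the hash function in keeps the bit-setting logic testable on its own and makes the keccak256 dependency explicit rather than hidden.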

Log Indices

The bloom covers which addresses and topics appear, not how many logs there are or in what order. Log indices within a block are contiguous from 0; a gap is proof of a missing log. Contiguous-index validation is the deterministic complement to bloom equality: one constrains log content, the other exposes dropped or reordered entries.
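The index check is small enough to sketch in a few lines. `first_index_gap` is a hypothetical helper that takes the log indices of one block in the order they were ingested.

```python
from typing import Optional, Sequence

def first_index_gap(log_indices: Sequence[int]) -> Optional[int]:
    """Return the first expected index that is missing or out of place.

    Indices must run 0, 1, 2, ... with no gaps; None means they do.
    """
    for expected, actual in enumerate(log_indices):
        if actual != expected:
            return expected
    return None
```

For example, `first_index_gap([0, 1, 3])` reports `2` as the first missing index, while `first_index_gap([0, 1, 2])` returns `None`.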

The Chain Link

parentHash provides a structural guarantee. Every header commits to its predecessor's header, which commits to everything in the predecessor's block. One trusted header therefore pins its entire ancestry, and any block claimed to extend it must link back hash-by-hash. This is the cheapest check in the stack and the one most commonly skipped.
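A continuity check over a run of headers might look like the sketch below. The `Header` type and `first_broken_link` are hypothetical, and the sketch takes each header's `hash` as given. A stricter version would also recompute that hash from the RLP-encoded header with keccak256.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

@dataclass(frozen=True)
class Header:
    number: int
    hash: str
    parent_hash: str

def first_broken_link(trusted: Header, headers: Sequence[Header]) -> Optional[int]:
    """Walk forward from a trusted header; return the number of the first
    block that does not link to its predecessor, or None if all link."""
    prev = trusted
    for header in headers:
        if header.number != prev.number + 1 or header.parent_hash != prev.hash:
            return header.number
        prev = header
    return None

# Example with made-up hashes.
genesis = Header(100, "0xaa", "0x99")
good = [Header(101, "0xbb", "0xaa"), Header(102, "0xcc", "0xbb")]
bad = [Header(101, "0xbb", "0xaa"), Header(102, "0xcc", "0xff")]
```

Here `first_broken_link(genesis, good)` returns `None`, while `first_broken_link(genesis, bad)` returns `102`.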

How SQD Applies This At Ingestion

The ingester (source: github.com/subsquid/data) differs from a standard pipeline in three structural ways.

Multiple independent endpoints. StandardDataSource in crates/data-source/src/standard.rs takes Vec<DataClient>. Endpoints poll independently; disagreements are resolved explicitly rather than by whichever response arrives first.

Parent-hash continuity on every block. accept_new_block() rejects any block whose parent_hash does not match the last committed block. Endpoints returning non-linking blocks have their error counter incremented and are benched on a roughly exponential schedule: [0, 100, 200, 500, 1000, 2000, 5000, 10000] milliseconds. Misbehaving sources drop out of rotation automatically.
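The benching behaviour can be sketched as follows. This is not the ingester's code: `EndpointHealth` is a hypothetical class, and two details are assumptions rather than facts from the source, namely that the error count indexes directly into the schedule (capped at the last entry) and that a success resets the counter.

```python
BACKOFF_MS = [0, 100, 200, 500, 1000, 2000, 5000, 10000]

class EndpointHealth:
    """Tracks consecutive errors for one endpoint and when it may be used again."""

    def __init__(self) -> None:
        self.errors = 0
        self.benched_until_ms = 0

    def record_error(self, now_ms: int) -> int:
        """Count an error, bench the endpoint, and return the pause applied."""
        self.errors += 1
        # ASSUMPTION: error count selects the schedule entry, capped at the end.
        pause = BACKOFF_MS[min(self.errors, len(BACKOFF_MS) - 1)]
        self.benched_until_ms = now_ms + pause
        return pause

    def record_success(self) -> None:
        # ASSUMPTION: a good response clears the error streak.
        self.errors = 0

    def is_available(self, now_ms: int) -> bool:
        return now_ms >= self.benched_until_ms
```

Under these assumptions the first non-linking block benches an endpoint for 100 ms, the second for 200 ms, and a persistently bad endpoint settles at 10 seconds per retry.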

Forks by quorum. When an endpoint signals a reorg, the ingester does not immediately reorganize. A reorg is accepted only when more than half of endpoints report the same fork, all active endpoints report it, or a 2-second timeout elapses. The condition is in poll_next_event(). A single endpoint cannot unilaterally reorganize the chain.
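A literal reading of the three acceptance conditions can be sketched as a single predicate. `should_accept_fork` and its parameters are hypothetical, and what the timeout is measured from (here, the first fork signal) is an assumption. The actual logic lives in poll_next_event().

```python
def should_accept_fork(reporting: int, total: int, active: int,
                       waited_s: float, timeout_s: float = 2.0) -> bool:
    """Decide whether to act on a fork signal.

    reporting: endpoints reporting the same fork
    total:     all configured endpoints
    active:    endpoints currently in rotation (not benched)
    waited_s:  seconds since the fork was first signalled (assumption)
    """
    if reporting <= 0:
        return False
    majority = reporting * 2 > total                   # more than half of endpoints
    all_active = active > 0 and reporting >= active    # every active endpoint agrees
    timed_out = waited_s >= timeout_s                  # waited long enough
    return majority or all_active or timed_out
```

With three healthy endpoints, one fork report is not enough at first (`should_accept_fork(1, 3, 3, 0.0)` is `False`), but two are (`should_accept_fork(2, 3, 3, 0.0)` is `True`).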

On top of the structural checks, every ingested block is validated against its own header: transactions trie rebuilt and compared to transactionsRoot, logs bloom recomputed and compared to logsBloom, log indices checked for contiguity, and failed transactions (status: 0) preserved rather than silently dropped.

Validated blocks are packed into compressed Parquet chunks. The chunk's storage path, from crates/archive/src/layout.rs, is {top}/{first_block}-{last_block}-{last_hash} - the final block's hash is part of the storage address, making the chunk a content-addressed commitment to a specific chain segment. Chunks are then replicated roughly 30× across 2,000+ worker nodes, each bonded with 100,000 SQD as slashable collateral.
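The path template can be exercised with a small sketch. The helpers below are hypothetical and follow the template literally. Any zero-padding of block numbers or shortening of the hash in the real layout is not reproduced, and `follows` assumes the path carries the full hash of the final block.

```python
def chunk_path(top: str, first_block: int, last_block: int, last_hash: str) -> str:
    """Build a path of the form {top}/{first_block}-{last_block}-{last_hash}."""
    return f"{top}/{first_block}-{last_block}-{last_hash}"

def parse_chunk_path(path: str) -> tuple[str, int, int, str]:
    """Split a chunk path back into (top, first_block, last_block, last_hash)."""
    top, _, name = path.rpartition("/")
    first, last, last_hash = name.split("-", 2)
    return top, int(first), int(last), last_hash

def follows(prev_path: str, first_block: int, parent_hash: str) -> bool:
    """True if a chunk starting at first_block, whose first block has the given
    parent hash, extends the chain segment named by prev_path."""
    _, _, prev_last, prev_hash = parse_chunk_path(prev_path)
    return first_block == prev_last + 1 and parent_hash == prev_hash

# Example with made-up values.
demo_path = chunk_path("0017000000", 17000000, 17000999, "0xabc123")
```

Because the final hash is in the name, a reader can check that the next chunk links to this one before opening either file.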

At query time, the Portal fans out to multiple workers and cross-checks their outputs. Because chunks are deterministic Parquet, two honest workers executing the same query produce byte-identical outputs and matching output hashes; divergence is definitive.
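The cross-check reduces to grouping responses by their hash. The sketch below is an illustration, not the Portal's code: `cross_check` is a hypothetical helper, and SHA-256 is an assumed choice of output hash, since the source does not name one.

```python
import hashlib

def output_hash(payload: bytes) -> str:
    # ASSUMPTION: SHA-256 stands in for whatever hash the network uses.
    return hashlib.sha256(payload).hexdigest()

def cross_check(responses: dict[str, bytes]) -> tuple[bool, dict[str, list[str]]]:
    """Group worker ids by the hash of what they returned.

    Returns (agree, groups), where agree is True only if every worker
    produced byte-identical output.
    """
    groups: dict[str, list[str]] = {}
    for worker_id, payload in responses.items():
        groups.setdefault(output_hash(payload), []).append(worker_id)
    return len(groups) == 1, groups

# Example: worker w3 returns a different payload for the same query.
agree, groups = cross_check({
    "w1": b'{"rows": 3}',
    "w2": b'{"rows": 3}',
    "w3": b'{"rows": 2}',
})
```

In this example `agree` is `False` and `groups` holds two entries, one listing `w1` and `w2` and one listing `w3`.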

Snoopy: Onchain Fraud Proofs (Beta)

The production path catches bad data at ingestion and cross-validates at query time. One residual case remains: a compromised worker that signs a bad result. The Portal catches the discrepancy at request time, but the rogue worker keeps its bond unless the violation is proven onchain.

Snoopy (github.com/subsquid/snoopy) is an experiment in closing that gap. It is permissionless - anyone can run an instance - and watches worker query logs in the background for responses that disagree with the consensus of their peers.

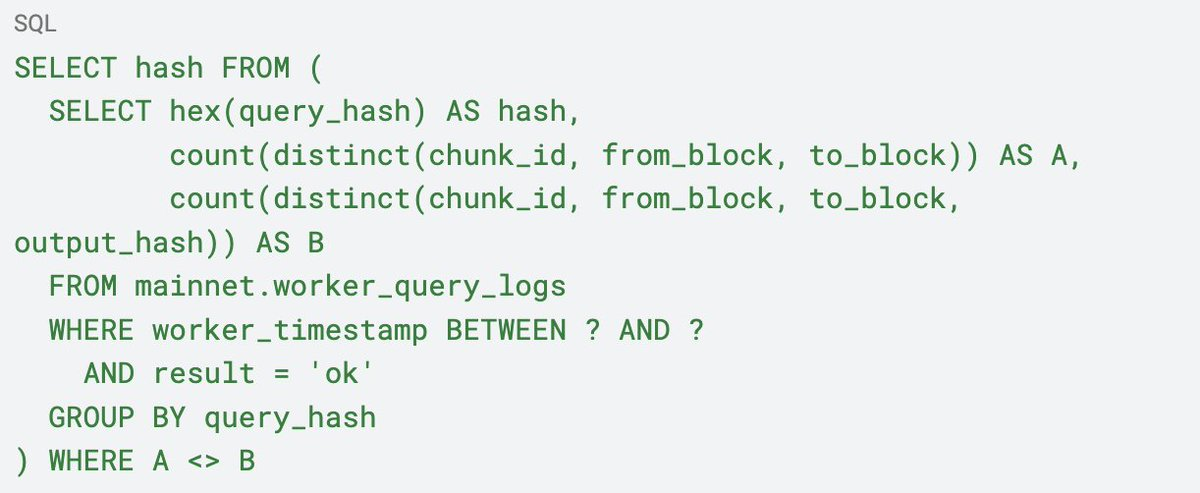

Detection reduces to a single SQL query:

When two workers execute the same query over the same chunk range and return different output hashes, B > A. Because chunks are deterministic, any divergence is a violation. For each flagged query, Snoopy identifies the minority group as rogue, collects at least five signed results from other workers provably assigned to that chunk - assignments are published as MPTs, and worker inclusion is proved with the same machinery as the transaction trie - and feeds the bundle into an SP1 zkVM program. The resulting Groth16 proof is posted onchain via ProvingManager.verifyAndEmit, which triggers the slashing path.
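A divergence query of this general kind can be sketched with SQLite. This is not Snoopy's actual query: the `query_log` table, its columns, and the helper functions are all hypothetical, and ties between groups are treated as having no clear minority.

```python
import sqlite3

# Flag every (query, chunk) pair that produced more than one output hash.
DIVERGENCE_SQL = """
SELECT query_hash, chunk
FROM query_log
GROUP BY query_hash, chunk
HAVING COUNT(DISTINCT output_hash) > 1
ORDER BY query_hash, chunk
"""

def find_divergent(conn: sqlite3.Connection) -> list[tuple[str, str]]:
    return conn.execute(DIVERGENCE_SQL).fetchall()

def minority_workers(conn: sqlite3.Connection, query_hash: str, chunk: str) -> list[str]:
    """Workers whose output hash differs from the largest agreeing group."""
    rows = conn.execute(
        "SELECT output_hash, worker_id FROM query_log "
        "WHERE query_hash = ? AND chunk = ? ORDER BY worker_id",
        (query_hash, chunk),
    ).fetchall()
    groups: dict[str, list[str]] = {}
    for out_hash, worker_id in rows:
        groups.setdefault(out_hash, []).append(worker_id)
    sizes = sorted((len(ws) for ws in groups.values()), reverse=True)
    if len(sizes) < 2 or sizes[0] == sizes[1]:
        return []  # no divergence, or a tie with no clear minority
    majority = max(groups, key=lambda h: len(groups[h]))
    return sorted(w for h, ws in groups.items() if h != majority for w in ws)

def make_demo_db() -> sqlite3.Connection:
    """In-memory log with one consistent query and one divergent query."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE query_log (worker_id TEXT, query_hash TEXT, "
        "chunk TEXT, output_hash TEXT)"
    )
    conn.executemany("INSERT INTO query_log VALUES (?, ?, ?, ?)", [
        ("w1", "q1", "chunk-7", "aaa"),
        ("w2", "q1", "chunk-7", "aaa"),
        ("w1", "q2", "chunk-7", "bbb"),
        ("w2", "q2", "chunk-7", "bbb"),
        ("w3", "q2", "chunk-7", "ccc"),
    ])
    return conn
```

On the demo data, only `q2` on `chunk-7` is flagged, and `w3` is the lone worker in the minority group.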

A few caveats. Snoopy currently targets a Sepolia contract. The five-witness threshold is a parameter under active calibration. It is not the primary line of defense - the Portal's request-time cross-validation is, and will remain so. If Snoopy is offline, nothing breaks; if it is wrong, the onchain verifier rejects the proof. The beta exists to observe behavior against real traffic before anything downstream depends on it.

The direction is what makes it worth doing. Worker results were already signed, which made misbehavior attributable. Snoopy turns attribution into an onchain event that can be triggered by anyone, without trusting SQD - a non-trivial shift in what "decentralized data infrastructure" means in practice.

Summary

Block headers ship with enough cryptographic structure - trie roots, a bloom filter, a parent-hash link - to verify essentially everything an RPC returns. Most data pipelines skip this verification. SQD's ingester performs it at the point data enters the network, so downstream consumers inherit the guarantee. Snoopy extends that model from ingestion-time correctness to after-the-fact fraud proofs, and is live for testing this week.